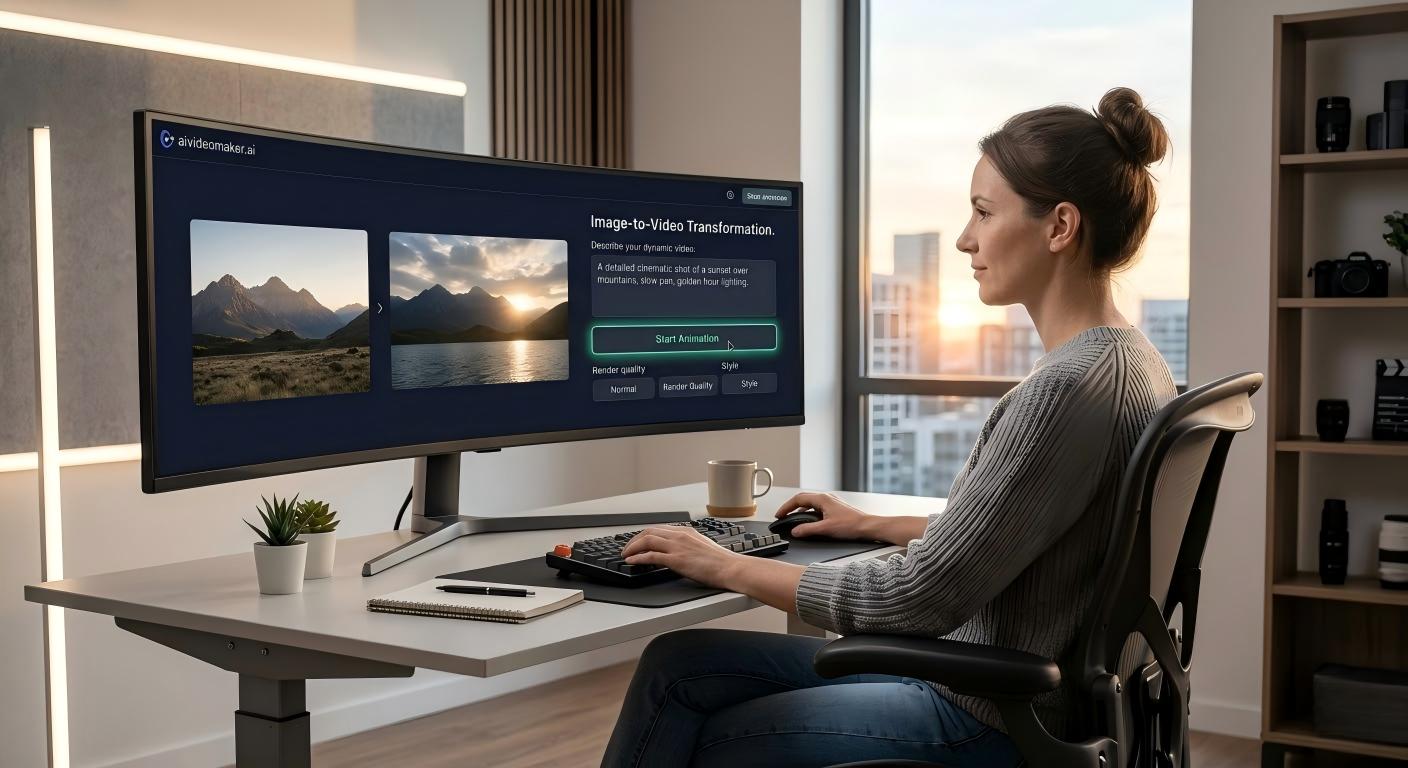

How to Animate Still Photos with an Image to Video AI Maker

Introduction

In the fiercely competitive landscape of digital advertising and visual content marketing, enterprise teams face persistent engagement problems. Consumer attention is harder to hold, and click-through rates (CTR) tend to suffer when campaigns rely solely on static media. Social platforms increasingly favor motion, so still images can be deprioritized in feeds, leaving expensive photographic assets underused. To counter this and put dormant assets back to work, integrating an image to video AI maker into your core production workflow is quickly moving from experimental luxury to standard practice.

Historically, transforming a static photograph into a dynamic cinematic sequence required painstaking manual keyframing, layer separation and rigging, and the high labor costs of professional motion graphics designers. This created bottlenecks in production throughput, preventing marketing teams from scaling their visual campaigns at the speed of internet trends. Today, optimized machine learning models remove much of this manual work.

By leveraging neural network architectures, organizations can now extrapolate fluid, realistic motion from a single static image. This shift allows creators to produce cinematic assets in minutes, without owning specialized GPU hardware or having years of animation experience. In this technical guide, we will break down how algorithmic depth mapping works, review the input parameters needed for clean results, and explain how a premium AI video generator online can give your entire visual catalog a commercial edge.

Core Depth Estimation & Kinematic Advantages

To understand what an advanced image animation tool does, it helps to look at two ideas from computer vision: monocular depth estimation and frame-to-frame temporal synthesis. A state-of-the-art diffusion model does far more than apply a two-dimensional warp filter. Conceptually, the generator first analyzes the uploaded JPG or PNG. From cues such as illumination gradients, shadows and occlusion, and texture density in the flat image, the neural network infers the depth structure of the scene, which can be pictured as a per-pixel depth map. In practice, a diffusion model learns these cues implicitly rather than running them as separate, explicit stages.

With the depth structure understood, the model effectively separates foreground subjects from background layers. The core diffusion engine, such as the Wan 2.2 model, then predicts how each region should move. One way to picture this is the difference between two views of motion. A Lagrangian view tracks individual points along their paths, which can produce gelatinous distortion when tracks drift. An Eulerian view instead describes motion as a field of velocity vectors across the whole frame. Predicting motion globally in this way yields plausible, natural-looking movement, whether that is flowing water, wind-blown hair, or rigid structures in an architectural render.

The AI must also handle disocclusion. When a foreground subject shifts along the X or Y axis, it exposes background areas that were hidden in the original photograph and for which no pixel data exists. An enterprise-grade AI engine uses inpainting to generate plausible content for these gaps. As a result, the synthesized animation keeps a consistent perspective and scene layout while simulating organic, cinematic motion.
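The gap-filling problem described above can be illustrated with a toy example on a single row of pixels. This is only a sketch under stated assumptions, not how a diffusion model works internally: the function names `warp_scanline` and `fill_holes` are hypothetical, depth is given as small integers (1 is nearest), and "inpainting" is reduced to copying the nearest background pixel.

```python
from typing import List, Optional, Tuple

Pixels = List[Optional[str]]
Depths = List[Optional[int]]


def warp_scanline(row: List[str], depth: List[int], camera_shift: int) -> Tuple[Pixels, Depths]:
    """Shift each pixel sideways by an amount that grows as depth shrinks.

    Near pixels (small depth) move farther than distant ones, which is the
    parallax effect. Positions that receive no pixel stay None: these are
    the exposed gaps that must be filled in afterwards.
    """
    width = len(row)
    out: Pixels = [None] * width
    out_depth: Depths = [None] * width
    for x in range(width):
        target = x + camera_shift // depth[x]  # depth values must be >= 1
        if not 0 <= target < width:
            continue  # pixel moved out of frame
        # When two pixels land on the same spot, the nearer one wins.
        if out_depth[target] is None or depth[x] < out_depth[target]:
            out[target] = row[x]
            out_depth[target] = depth[x]
    return out, out_depth


def fill_holes(row: Pixels, depth: Depths) -> Pixels:
    """Fill each gap from its nearest known neighbour, preferring background."""
    width = len(row)
    filled = list(row)
    for x in range(width):
        if row[x] is not None:
            continue
        left = next((i for i in range(x - 1, -1, -1) if row[i] is not None), None)
        right = next((i for i in range(x + 1, width) if row[i] is not None), None)
        candidates = [i for i in (left, right) if i is not None]
        if candidates:
            # Larger depth means farther away, i.e. more likely background.
            source = max(candidates, key=lambda i: depth[i])
            filled[x] = row[source]
    return filled


# "F" is the foreground subject; lowercase letters are background.
warped, warped_depth = warp_scanline(list("abFFcdef"), [3, 3, 1, 1, 3, 3, 3, 3], 3)
print(warped)                            # [None, 'a', 'b', None, None, 'F', 'F', 'e']
print(fill_holes(warped, warped_depth))  # ['a', 'a', 'b', 'b', 'b', 'F', 'F', 'e']
```

The gaps at positions 3 and 4 are where the subject used to be. A real model generates new texture there rather than copying a neighbour, but the bookkeeping (which pixels were exposed, and which side counts as background) is the same idea.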

Critical Market Applications & Real-World Use Cases

The case for prompt-driven animation comes from the fast product lifecycles of modern algorithmic commerce. Social media managers frequently face engagement bottlenecks on platforms optimized for constant motion, such as TikTok, Instagram Reels, and YouTube Shorts. These teams must iterate rapidly, turning static product shots into high-retention video within minutes. Professional digital artists use the same technology to generate b-roll or atmospheric effects around their static vector illustrations. A high-fidelity static to dynamic video platform therefore lets lean creator teams compete directly with large, resource-heavy production studios.

E-commerce managers also deploy these systems for thumbnail and product listing optimization. By feeding standard product catalog images into an AI talking photo generator, marketers can generate micro-video hooks intended to improve landing page conversion rates. For instance, a flat lay of a perfume bottle can be transformed into a dynamic sequence featuring shimmering volumetric lighting and simulated fluid movement. Because generation is conditioned on the source image, the product's colors and branding geometry are designed to stay consistent throughout the generated motion.

By handing this visual transformation to an automated pipeline, founders can scale their production throughput. These dynamic assets can also be integrated into automated marketing channels, including AI UGC video creator ad campaigns. Brands that adopt this rendering technology set their visual identity apart from competitors who still rely on motionless media formats.

Comparison Table: Visual Content Production Modalities

To evaluate the practical and financial viability of different production approaches, it helps to compare them side by side. The following table contrasts Image-to-Video AI Generation with legacy animation techniques.

| Production Modality | Visual Realism & Physics Accuracy | Rendering Velocity & Scale | Software Complexity & Cost |
|---|---|---|---|
| Image to Video AI Maker | Supreme. Diffusion models hallucinate realistic physics conditional to the source data. | Extremely Fast. Renders complex cinematic sequences in minutes. Unlimited scalability. | Minimal. Requires only a simple SaaS subscription. Zero GPU hardware required. |
| Traditional Keyframing (After Effects) | Absolute. Manual control over every vector, but prone to human error in perspective. | Catastrophic. Requires weeks of skilled human labor per second of video. | Astronomical. Demands expensive software licenses, high-end PCs, and skilled salaries. |
| Basic Cinemagraph Apps | Moderate. Uses simple masking to loop isolated parts of an image. | Fast, but heavily restricted in output duration and aesthetic scope. | Low. Cheap mobile apps, but offers zero true 3D spatial extrapolation. |
| Standard GIF Generators | Non-Existent. Relies on simple binary looping of crude data packets. | Moderate. Extremely limited in frame count and visual fidelity. | Terrible. Heavily compressed data creates massive engagement deficits. |

Image Preparation Best Practices & Specs

High-fidelity animation depends on the quality of the input. The image supplied to the neural network is the seed for everything the model generates. If the input is full of heavy JPEG compression artifacts, poorly lit, or severely blurred, the model's depth and motion inference becomes unreliable. The result is erratic object warping, flickering, and unusable video output. Before starting a generation, use an advanced AI image editor to de-noise, sharpen, and properly isolate the primary subjects in the image.

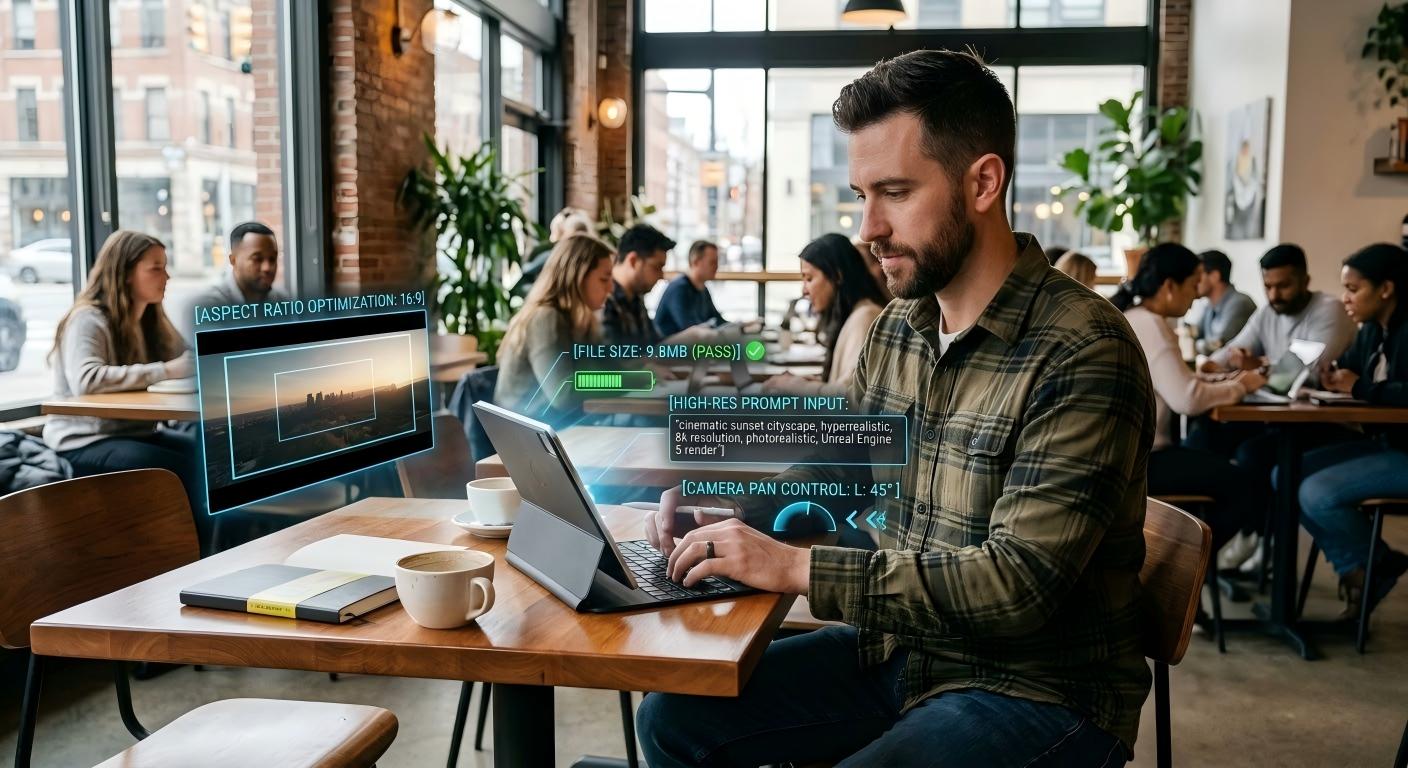

Uploads must also use a supported format: PNG, JPG, or WEBP. The platform enforces a size limit, typically capping single uploads at 10MB to keep memory use manageable for the Wan 2.2 diffusion model. Uncompressed RAW camera files and HEIC images are not supported and can cause upload failures or rendering timeouts, so convert them to a supported format first.
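A pre-upload check for these constraints can be sketched in a few lines of Python. The function name `check_upload` is hypothetical and this is not part of any platform SDK. Two assumptions are baked in: `.jpeg` is treated as equivalent to JPG, and 10MB is read as 10 × 1024 × 1024 bytes.

```python
from pathlib import Path
from typing import List

# Formats named in the text; ".jpeg" is an assumed alias for JPG.
ALLOWED_EXTENSIONS = {".png", ".jpg", ".jpeg", ".webp"}
# Assumption: the 10MB cap is interpreted as 10 MiB.
MAX_UPLOAD_BYTES = 10 * 1024 * 1024


def check_upload(filename: str, size_bytes: int) -> List[str]:
    """Return a list of problems; an empty list means the file looks acceptable."""
    problems: List[str] = []
    extension = Path(filename).suffix.lower()
    if extension not in ALLOWED_EXTENSIONS:
        problems.append(f"unsupported format: {extension or 'no extension'}")
    if size_bytes > MAX_UPLOAD_BYTES:
        problems.append(f"file too large: {size_bytes} bytes (limit {MAX_UPLOAD_BYTES})")
    return problems


print(check_upload("hero.PNG", 2_000_000))  # []
print(check_upload("shot.heic", 1_000))     # ['unsupported format: .heic']
```

Checking the extension only catches obvious mistakes. A file with the right name but the wrong contents would still be rejected server-side.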

Finally, to maximize visual fidelity, supply high-resolution seed images. If you supply a low-resolution thumbnail, the AI has to invent large amounts of missing detail, resulting in a soft-focus, low-quality output. Crop your assets to the aspect ratio of the target distribution channel (e.g., 16:9 for YouTube, 9:16 for vertical Reels) prior to generation. For consistent branding across multiple generated clips, integrate AI consistent character generation into your wider asset pipeline.
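Matching the aspect ratio before generation comes down to simple arithmetic. The sketch below computes a centered crop box for a target ratio; `center_crop_box` is a hypothetical helper name, and centering is an assumption, since a product shot may need the crop anchored on the subject instead.

```python
from typing import Tuple


def center_crop_box(width: int, height: int, ratio_w: int, ratio_h: int) -> Tuple[int, int, int, int]:
    """Return (left, top, right, bottom) of the largest centered crop with the given ratio."""
    # Compare width/height against ratio_w/ratio_h without floating point.
    if width * ratio_h > height * ratio_w:
        # Image is too wide for the target: keep full height, trim the sides.
        new_w, new_h = height * ratio_w // ratio_h, height
    else:
        # Image is too tall (or already matches): keep full width, trim top and bottom.
        new_w, new_h = width, width * ratio_h // ratio_w
    left = (width - new_w) // 2
    top = (height - new_h) // 2
    return (left, top, left + new_w, top + new_h)


# A 4000x3000 photo prepared for two channels:
print(center_crop_box(4000, 3000, 16, 9))  # (0, 375, 4000, 2625) for YouTube
print(center_crop_box(4000, 3000, 9, 16))  # (1156, 0, 2843, 3000) for vertical Reels
```

The returned tuple uses the (left, upper, right, lower) order that common imaging libraries such as Pillow expect for a crop. Note how much of a landscape photo is lost in the 9:16 case, which is a good reason to shoot with vertical placements in mind.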

Frequently Asked Questions (FAQ)

1. What are the definitive file formatting specifications for image ingestion?

The platform accepts images in PNG, JPG, or WEBP format. Uploading other formats, such as BMP or camera RAW files, will result in an upload failure. Ensure each image file does not exceed the enforced 10MB limit.

2. Why does my generated video sequence only output at 480p standard definition?

To keep free drafts fast and let you iterate without worrying about cost, standard free drafts are processed at a computationally light 480p resolution. For commercial-grade HD output, users must access paid tiers or use the integrated AI Video Upscaler to upscale drafts to 720p or 1080p resolution.

3. What are the standard benchmark latencies for rendering an image into a video?

Server load fluctuates, but rendering a 5-second cinematic sequence takes approximately 200 seconds per generation task on average. Enterprise teams that need high daily volume can get concurrent job processing through Premium subscription tiers. Concurrency does not make a single clip render faster, but it substantially reduces the total turnaround time for a batch.
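The effect of concurrency on a batch can be estimated with a rough calculation. This sketch assumes every clip takes the approximate 200-second average quoted above and that jobs run in full "waves"; `estimate_batch_seconds` is a hypothetical helper, and real queue times will vary with server load.

```python
import math

# Approximate average render time for one 5-second clip, taken from the text.
SECONDS_PER_CLIP = 200


def estimate_batch_seconds(num_clips: int, concurrent_jobs: int = 1,
                           seconds_per_clip: int = SECONDS_PER_CLIP) -> int:
    """Estimate wall-clock time for a batch when jobs run in parallel waves."""
    if num_clips < 0 or concurrent_jobs < 1:
        raise ValueError("num_clips must be >= 0 and concurrent_jobs >= 1")
    waves = math.ceil(num_clips / concurrent_jobs)
    return waves * seconds_per_clip


print(estimate_batch_seconds(30))     # 6000 seconds, i.e. 100 minutes one at a time
print(estimate_batch_seconds(30, 4))  # 1600 seconds with 4 concurrent jobs
```

Under these assumptions, 30 clips drop from 100 minutes to under 27 minutes with four concurrent jobs, even though each individual clip still takes about 200 seconds.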

4. Does the generator accurately simulate physical properties based on the image?

Largely, yes. Premium models condition generation on the content of the image. If the input features reflective cosmetic liquids, atmospheric smoke, or ocean waves, the neural network generates motion that convincingly resembles refraction, fluid flow, and particle dispersion. The result is a learned approximation rather than a physical simulation, but it is realistic enough for highly technical marketing sectors.

5. Can I explicitly command specific camera mechanics like a dolly zoom or a pan?

Currently, the base image-to-video algorithm derives motion from the depth cues in the input image rather than from procedural camera controls. To influence motion, users can supply a specific text prompt alongside the image (e.g., 'slow cinematic pan to the right') to guide the diffusion model's output.
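For teams generating many clips, it can help to assemble motion prompts in a consistent way. The helper below is a hypothetical convenience function, not a platform API: it only builds the text string, and the parameter names `camera`, `subject`, and `pace` are assumptions chosen for illustration.

```python
from typing import Optional


def build_motion_prompt(camera: Optional[str] = None,
                        subject: Optional[str] = None,
                        pace: str = "slow") -> str:
    """Combine an optional camera move and subject motion into one prompt string."""
    parts = []
    if camera:
        parts.append(f"{pace} cinematic {camera}")
    if subject:
        parts.append(subject)
    if not parts:
        raise ValueError("describe at least one camera or subject motion")
    return ", ".join(parts)


print(build_motion_prompt(camera="pan to the right"))
# slow cinematic pan to the right
print(build_motion_prompt(camera="pan to the right", subject="steam rising from the cup"))
# slow cinematic pan to the right, steam rising from the cup
```

Keeping the camera move and the subject motion as separate inputs makes it easier to test one variable at a time when a generated clip does not move the way you expected.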

6. Are free-tier generated visual assets legally authorized for commercial distribution?

No. Videos generated under the free tier retain a cryptographic watermark, and the usage rights are strictly limited to non-commercial, personal drafting. To monetize your videos on platforms like YouTube or deliver files for paid client projects, users must upgrade to a commercial subscription plan that includes commercial usage rights.

Conclusion

The reality of modern visual content marketing is hard to dispute: trying to capture and retain consumer attention with static imagery alone risks weak engagement and wasted spend. By moving your creative supply chain to our image to video AI maker, you improve your assets' cinematic quality, depth stability, and market readiness. You gain access to our high-fidelity rendering capabilities, reduce the risk of algorithm suppression, and unlock faster speed-to-market for your entire visual catalog.

Do not compromise your brand's digital presence with slow, manual animation workflows. Secure your entire visual content supply chain by upgrading your operational infrastructure today. Access the advanced AI Video Maker platform to execute your first high-fidelity transformation, and fundamentally revolutionize your brand's digital marketing trajectory. Explore our complete suite of tools, including the Singing Photo AI, to maximize your creative output.