Seamlessly Lengthen Clips Using an Advanced AI Video Extender

Introduction

In generative media, hardware limits remain one of the most persistent pain points. For digital directors, post-production engineers, and enterprise marketing teams, the most frustrating constraint is the per-generation length cap: standard diffusion models typically output clips of only 4 to 5 seconds, which forces abrupt scene cuts and breaks story pacing just as a scene reaches its climax. An advanced AI video extender removes this ceiling and preserves your narrative arc.

Historically, working around these duration limits meant destructive post-production tricks. Editors either slowed the footage down, which introduces motion stutter, or built boomerang loops that immediately signal to the viewer that the content was procedurally generated. Both methods break the immersion of the footage and tend to hurt viewer retention and algorithm performance.

Today, advanced neural networks address this temporal bottleneck. Using autoregressive prediction models, creators can direct the engine to generate plausible subsequent frames, extending a clip past the single-generation limit. This B2B technical guide explains the mechanics of end-frame seeding and temporal context windows. It also covers the parameter choices needed to generate long AI video without geometric degradation, and shows how our generative architecture maintains continuity across your digital cinematic portfolio.

Core Autoregressive & Temporal Expansion Advantages

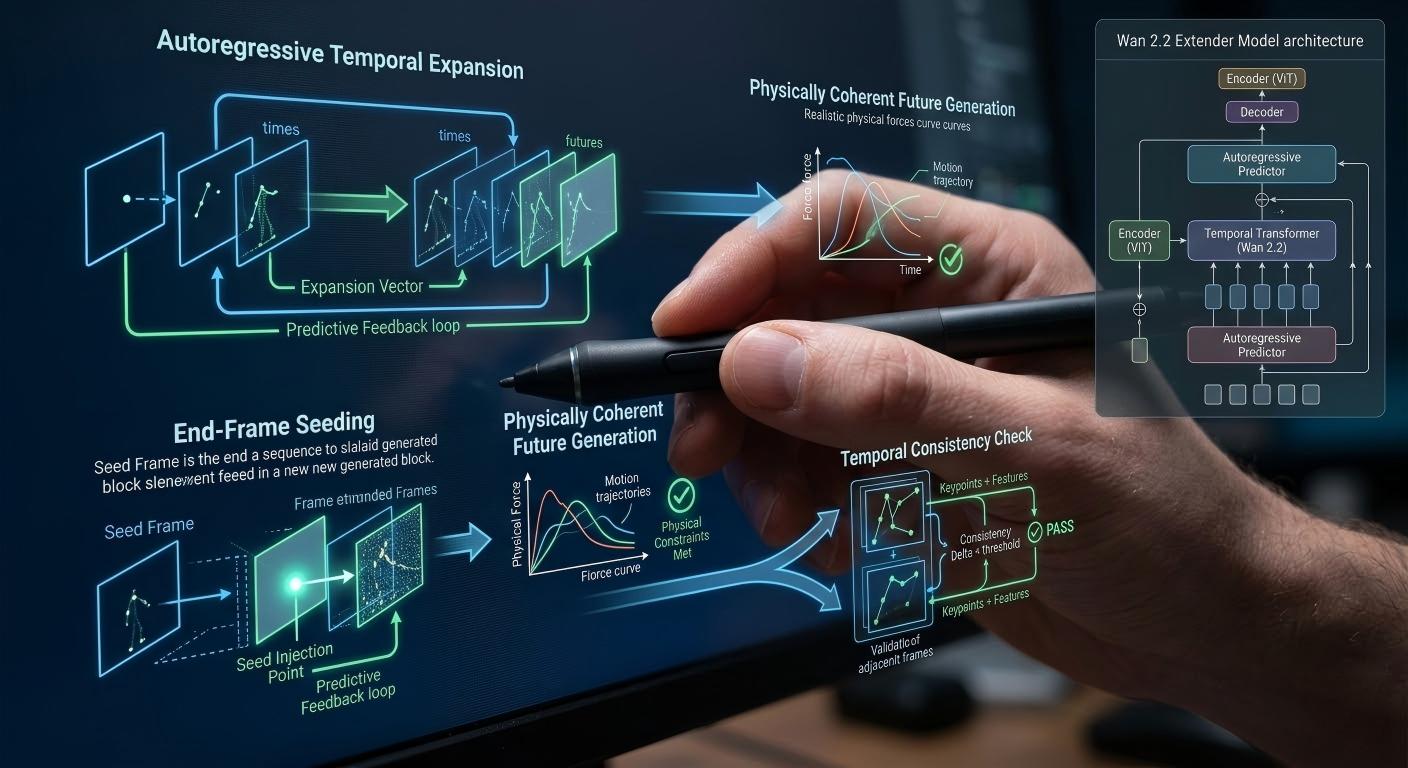

To understand why algorithmic sequence continuation works, it helps to look at autoregressive modeling and end-frame seeding. Standard generative models operate within a fixed temporal context window, for example the 120 frames (Frame 0 through Frame 119) of a 5-second clip at 24 fps. When that window closes, generation stops. An AI video extender moves past this boundary by using the final frame of the initial sequence as the anchor for the next rendering pass.

This process is governed by temporal attention mechanisms. The diffusion engine, such as the Wan 2.2 model, ingests the final frames of the source video and estimates the current velocity, trajectory, and spatial coordinates of every object in the scene. Rather than starting from pure Gaussian noise, the system performs an autoregressive expansion: it predicts a physically coherent future state for those pixels. If a vehicle is traveling along the X-axis at a given velocity at second 5.0, the expansion ensures that at second 5.1 the vehicle keeps that momentum.
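The chaining idea can be illustrated with a toy sketch. It is not the Wan 2.2 implementation: `Frame`, `toy_generate`, and `extend` are hypothetical names, the scene state is reduced to a single X position, and the "model" is a constant-velocity extrapolation standing in for a real diffusion pass. What it does show is the control flow, where each cycle is seeded only by the last frames of the clip so far.

```python
from dataclasses import dataclass
from typing import Callable, List

FPS = 24  # assumed frame rate for this sketch


@dataclass(frozen=True)
class Frame:
    index: int  # position of the frame in the full timeline
    x: float    # toy scene state: object position along the X axis


def toy_generate(seed: List[Frame], n_frames: int) -> List[Frame]:
    """Hypothetical stand-in for the model: continue the motion at the
    per-frame velocity estimated from the last two seed frames."""
    prev, last = seed[-2], seed[-1]
    velocity = last.x - prev.x
    return [Frame(last.index + k, last.x + velocity * k)
            for k in range(1, n_frames + 1)]


def extend(clip: List[Frame], cycles: int, seconds_per_cycle: float = 5.0,
           context: int = 2,
           generate: Callable[[List[Frame], int], List[Frame]] = toy_generate
           ) -> List[Frame]:
    """Autoregressive extension: every cycle sees only the trailing
    `context` frames of what has been produced so far."""
    out = list(clip)
    n_frames = round(seconds_per_cycle * FPS)
    for _ in range(cycles):
        seed = out[-context:]          # end-frame seeding
        out.extend(generate(seed, n_frames))
    return out


# A 5-second base clip: 120 frames moving 0.5 units per frame.
base = [Frame(i, 0.5 * i) for i in range(5 * FPS)]
extended = extend(base, cycles=2)
print(len(extended) / FPS, "seconds")
```

Because the toy generator reads its velocity from the seed frames, the first new frame continues exactly where the base clip ended. In a real model, small prediction errors at each seed would carry into the next cycle, which is where drift comes from.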

Furthermore, the AI must handle occlusion and background hallucination. As a camera pans or a subject moves forward during the extended sequence, the neural network must invent background geometry that did not appear in the initial clip. The latent diffusion architecture generates plausible structural additions, such as new buildings in a city skyline or continuous fluid motion in a crashing wave. By enforcing Eulerian flow constraints, the engine keeps these newly introduced elements consistent with the lighting, texture, and atmospheric perspective of the original clip, so the sequence progresses without a visible seam.

Critical Market Applications & Real-World Use Cases

The adoption of autoregressive temporal expansion is driven by the production demands of modern narrative filmmaking and commercial broadcasting. In digital storytelling, short-film directors frequently need prolonged establishing shots or continuous, unbroken tracking shots (the 'oner') to build psychological tension. Isolated 5-second generative bursts break the audience's immersion, so these directors use expansion algorithms to assemble a complete, uncut narrative arc.

Documentary producers working with synthetic media also need extended sequences to depict complex logistical operations. For instance, rendering a continuous drone flyover of a large industrial complex, such as the subcritical fluid extraction facilities in Guizhou, China, requires seamless background hallucination over a long interval. The producer uses the expansion tool to push the flight path forward for 20 to 30 seconds. The resulting continuous AI scene is then long enough to carry voiceover narration and lower-third graphics without cutting to black.

Enterprise marketing divisions use the same technology to build out product teaser narratives. If an initial generative prompt renders an engaging product reveal but cuts off before the product is fully demonstrated, the editor triggers the expansion instead of abandoning the clip. This frees the agency's creative pace from the per-generation length limit. By handing temporal synthesis to a specialized AI video expansion pipeline, marketing directors can plan production throughput more predictably and give every commercial asset the duration it needs to support watch-time metrics and retail conversions.

Comparison Matrix: Temporal Extension Modalities

To evaluate the structural and financial viability of different video lengthening methods, post-production engineers should compare them side by side. The following matrix contrasts advanced AI video extension with legacy NLE alternatives across four production metrics:

| Extension Modality | Narrative Progression & Logic | Frame-Rate Preservation | Logical Continuity & Coherence | Workflow Speed & OpEx |
|---|---|---|---|---|
| Advanced AI Video Extender | Supreme. Hallucinates entirely new, physically coherent future actions and backgrounds. | Absolute. Generates native new frames at the exact same native frame rate (e.g., 24fps). | Perfect. The final seed frame rigidly locks the geometry of the subsequent expansion. | Extremely Fast. Requires only a button click and minor server processing time. |
| Looping / Boomerang (NLE) | Zero. The narrative permanently stalls, merely reversing the previous 5 seconds of action. | Good, but the motion inherently feels unnatural and robotic to the human eye. | Broken. Destroys the suspension of disbelief instantly. | Instant, but the resulting asset is functionally useless for high-stakes narratives. |
| Slow Motion Stretching | Poor. Artificially elongates the existing action without adding any new narrative context. | Catastrophic. Stretches the frames, resulting in severe motion stutter and low frame rates. | Maintained, but the cinematic pacing is completely ruined by the forced slow-mo. | Fast, but visually destructive and amateurish. |
| Starting New Prompts | Variable. You can write the "next" action, but the AI will generate a completely new subject. | Maintained, as it is a new generation. | Terrible. The character, lighting, and camera angle will completely mutate. | High Credit Bleed. Requires hours of re-rolling to find a semi-matching shot. |

Expansion Best Practices & Prompt Specs

A clean temporal extension depends on careful prompt engineering and context management. When using a seamless video generation tool, the most important decision is whether to keep the original text prompt or modify it. If the goal is simply to continue the current trajectory, such as a car driving down a straight highway, keep the original prompt unchanged. The network uses that textual context together with the end-frame seed to hallucinate continuous highway geometry.

However, if the narrative calls for a shift in action, the text prompt must be modified to guide the autoregressive engine. For instance, if the original 5-second clip features a man walking down a hallway, and the director wants him to open a door in the next 5 seconds, the extension prompt should read: 'Man walking down hallway reaches out and opens a wooden door.' Given this new instruction, the engine works out the required change in motion and transitions the character from walking to reaching without breaking his established identity.
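The keep-or-modify rule can be written down as a small helper. This is only a sketch of the decision described above: `extension_prompt` is a hypothetical function, not part of any platform API, and it assumes the modified prompt is formed by appending the next action to the original description, as in the hallway example.

```python
from typing import Optional


def extension_prompt(original: str, next_action: Optional[str] = None) -> str:
    """Return the prompt to send with an extension request.

    No next action: reuse the original prompt unchanged so the engine
    continues the current trajectory.
    Next action given: keep the original subject description and append
    the new action so the subject's identity carries over.
    """
    if not next_action:
        return original
    subject = original.rstrip(". ")
    action = next_action.strip().rstrip(".")
    return f"{subject} {action}."


# Continue the same motion: the prompt is returned as-is.
print(extension_prompt("Car driving down a straight highway"))

# Shift the action while keeping the subject.
print(extension_prompt("Man walking down hallway",
                       "reaches out and opens a wooden door"))
```

Keeping the original description at the front of the modified prompt is a design choice in this sketch: it mirrors the advice to describe a logical next action rather than a new subject.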

Engineers must also watch for geometric degradation. Over multiple successive extensions (e.g., expanding a 5-second clip into a 30-second continuous shot), minor hallucination errors accumulate and can produce 'temporal drift,' where the subject slowly loses focus or structural integrity. To prevent this, limit continuous extensions to 3 or 4 cycles, and use a traditional NLE cut to move to a new angle before the geometry degrades. Run expansions at the baseline 480p resolution to conserve credits, and reserve final HD enhancement for the integrated AI Video Upscaler once the complete narrative timeline is locked.
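A quick way to apply the cycle limit is to plan the extensions before rendering. The sketch below is illustrative only: `plan_extensions` is a hypothetical helper, and it assumes each cycle adds a fixed 5 seconds and that 4 cycles is the cap, following the guidance above.

```python
import math
from typing import Tuple


def plan_extensions(base_s: float = 5.0, target_s: float = 30.0,
                    step_s: float = 5.0, max_cycles: int = 4
                    ) -> Tuple[int, float, bool]:
    """Plan how many extension cycles to run for one continuous shot.

    Returns (cycles_to_run, duration_reached_s, needs_cut), where
    needs_cut is True when the target cannot be reached within the
    cycle cap and the remainder should be a new angle joined in the NLE.
    """
    needed = max(0, math.ceil((target_s - base_s) / step_s))
    cycles = min(needed, max_cycles)
    reached = base_s + cycles * step_s
    return cycles, reached, needed > max_cycles


print(plan_extensions(target_s=20.0))  # fits within the cap
print(plan_extensions(target_s=30.0))  # exceeds the cap, plan a cut
```

With these assumed defaults, a 20-second shot needs 3 cycles and stays within the limit, while a 30-second shot would need 5 cycles, so the plan stops at 25 seconds and flags a cut.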

Frequently Asked Questions (FAQ)

1. What is the maximum cumulative length achievable using the video expansion tool?

While the autoregressive engine can theoretically extend sequences indefinitely, strict server-side timeout protocols and the realities of geometric degradation impose practical limits. Currently, the optimal operating window is expanding a 5-second baseline clip up to 20 or 30 seconds cumulatively. Pushing beyond this typically results in noticeable temporal drift and structural mutation of the primary subject.

2. Does the extension process consume additional generation credits?

Yes. Every time the engine calculates and hallucinates a new sequence of frames, it requires massive GPU allocation. Therefore, expanding a 5-second clip by an additional 5 seconds will consume the equivalent computational credits of a brand-new generation. Users managing high-volume narrative projects should optimize their pipeline by securing a Pro Subscription Plan to maintain sufficient rendering bandwidth.

3. Will the video resolution degrade when executing multiple sequential extensions?

The internal rendering engine preserves the baseline pixel dimensions (typically 480p during the drafting phase) across all expanded frames. The core resolution does not drop; however, minor 'hallucinatory blur' may appear as the AI guesses complex background data over long periods. All sequences can be unified into 1080p HD during the final upscale phase.

4. Can I use the extender tool on videos that were not generated by the AI platform?

Currently, the advanced temporal context windows are strictly optimized to ingest latent seeds generated organically by the Wan 2.2 architecture. Attempting to ingest raw, live-action MP4s or footage rendered by competing AI platforms will result in a failure to lock the identity tokens, causing the extension to wildly hallucinate and break visual continuity.

5. What happens if I completely change the text prompt during an extension?

If you make a drastic prompt change, such as replacing 'a man walking' with 'a spaceship flying,' the neural network will attempt to morph the end frame of the man into the spaceship over the course of the new frames. This creates a highly surreal, hallucinogenic transition. For realistic continuity, prompt modifications should only describe logical subsequent actions, not a total subject replacement.

6. Can the AI video extender generate corresponding audio for the newly expanded frames?

The primary diffusion engine operates exclusively on visuals, modeling pixel trajectory and spatial geometry. It does not synthesize native Foley audio or environmental sound design. For audio-visual synchronization, post-production engineers must export the extended visual asset and add sound design in their NLE, or use cross-modal systems like the Singing Photo tool for audio-driven visual generation.
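For budgeting, the credit rule from question 2 (each 5-second extension costs the same as a brand-new generation) reduces to simple arithmetic. The sketch below is an illustration, not a pricing reference: `credits_for_clip` is a hypothetical helper and the per-generation credit price is a placeholder value, since the actual price is not stated here.

```python
def credits_for_clip(extension_cycles: int, credits_per_generation: int) -> int:
    """Total credits for one base generation plus N extension cycles,
    assuming every extension is billed like a fresh generation."""
    if extension_cycles < 0:
        raise ValueError("extension_cycles must be non-negative")
    return (1 + extension_cycles) * credits_per_generation


# Placeholder price of 10 credits per generation (assumption).
# A 5-second base clip extended 3 times (20 seconds total):
print(credits_for_clip(extension_cycles=3, credits_per_generation=10))
```

Under this rule the cost grows linearly with clip length, which is one more reason to draft at 480p and upscale only the final timeline.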

Conclusion

The engineering reality in digital media is clear: building an immersive narrative from fractured 5-second generative bursts leads to audience disengagement, broken cinematic pacing, and brand dilution. By moving your creative editing workflow to our AI video extender, you secure your project's temporal continuity and market readiness. The extender is built to resist geometric degradation and to speed up delivery of your entire long-form video portfolio.

Do not compromise your narrative integrity with substandard, jarring jump cuts. Secure your entire cinematic pipeline by upgrading your algorithmic capabilities today. Access the advanced AI Video Maker platform to instantly execute your first autoregressive expansion, drastically elevate your storytelling capabilities, and fundamentally revolutionize your studio's commercial trajectory.