Scale Your Marketing Fast: The Complete Guide to AI UGC Video Creation

Introduction

Modern performance marketing demands both visual fluency and a high volume of fresh creative. For e-commerce founders and digital agencies, scaling creative output with human talent alone is rarely practical: every shoot adds cost and scheduling bottlenecks that slow growth. Adopting an AI UGC video creator is therefore less a speculative experiment than a practical lever for optimizing Return on Ad Spend (ROAS). The digital advertising ecosystem is oversaturated, and consumers have become desensitized to traditional, high-gloss studio aesthetics. That desensitization shows up as ad fatigue and falling click-through rates (CTR) across the major ad networks.

Historically, producing User Generated Content (UGC) style ad videos meant paying high live-action production costs. Agencies absorbed actor appearance fees, studio rental overhead, drawn-out script-revision cycles, and the gridlock of physical production schedules. If an actor mispronounced a critical hook or the lighting failed, the footage was unusable and the team had to pay for a reshoot.

By moving from manual asset creation to automated asset synthesis, founders can make production throughput predictable and scale creative output on demand. This B2B guide breaks down how AI systems replicate visual authenticity. We evaluate the parameter configurations needed for good neural rendering, examine the throughput advantages of concurrent processing, and explain how working with a capable AI video maker shortens speed-to-market for your entire visual asset portfolio.

Core Behavioral Mapping & Authenticity Advantages

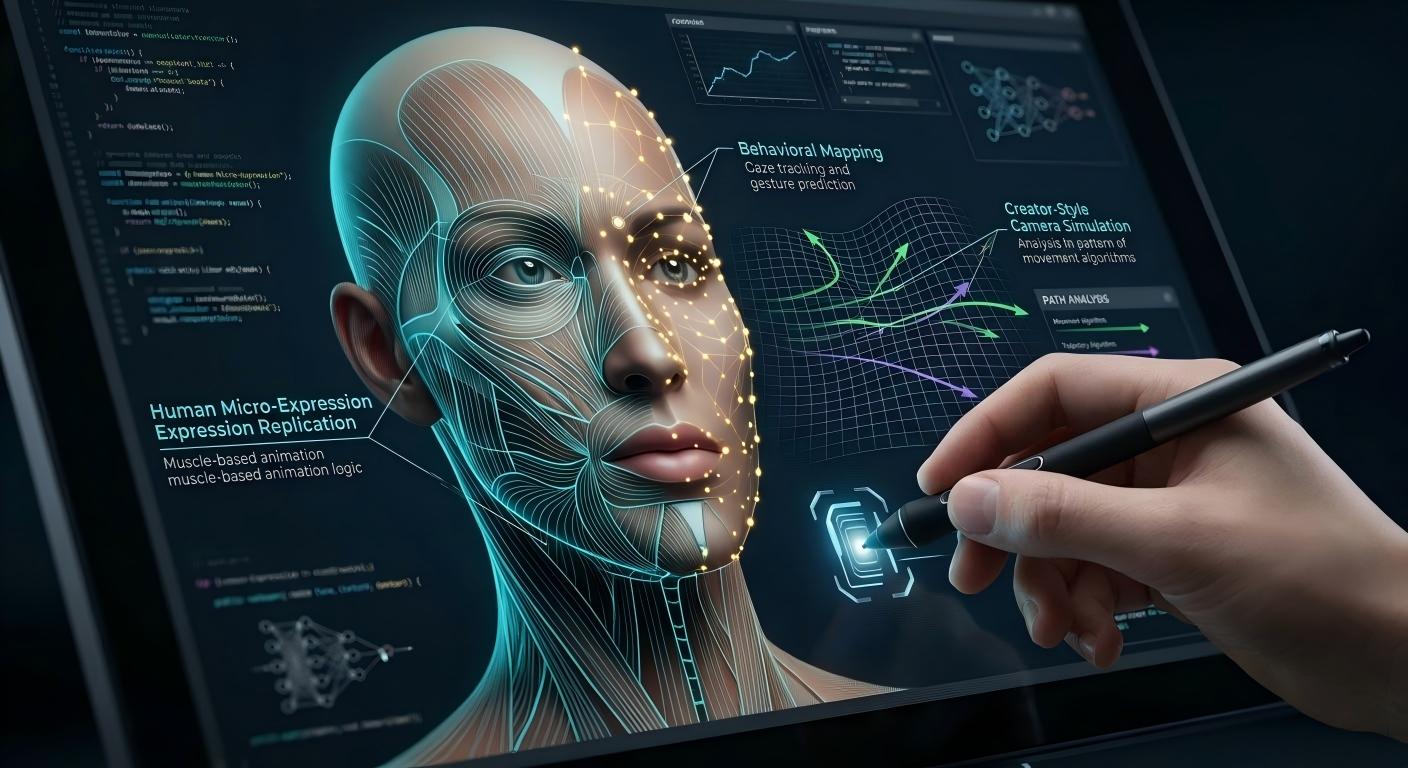

To understand what sets an advanced UGC style ad videos generator apart, it helps to look at the algorithms behind behavioral mapping and Natural Language Processing (NLP) integration. The engine does not apply a superficial facial filter; it reconstructs human facial behavior inside a learned latent space. First, specialized machine learning models perform monocular depth estimation and facial landmark regression. Trained on thousands of high-resolution samples of human footage, the generator builds a three-dimensional model of the face that tracks micro-expressions, including mouth movement synchronized to speech (phoneme-to-viseme mapping) and natural eye movement.

Following structural mapping, the system uses kinematic algorithms to synthesize 'creator-style' camera motion. Authenticity, the quality that counters a viewer's ad-fatigue response, depends on imperfect movement. The system simulates slight selfie-angle perspective distortion, natural handheld camera jitter, and subtle focus breathing. The underlying Wan 2.2 diffusion model architecture is also designed for temporal consistency: when the system computes foreground motion, it generates matching background content for the same frames, which reduces the geometry distortion and flickering commonly seen in more rudimentary motion tools.

Equally critical is integration with advanced spectrogram-based text-to-speech (TTS) models. Premium systems reproduce human prosody, meaning the cadence, vocal fry, pitch modulation, and breathing patterns that signal trustworthiness during a performance marketing pitch. Combining these behavioral layers yields a convincing virtual presenter: an avatar that delivers the impact of a native human creator with the stamina and script compliance of software.

Critical Market Applications & Real-World Use Cases

The case for prompt-driven user generated content AI comes from the short lifecycles of modern digital campaigns. Performance marketing videos need continuous A/B testing of visual hooks to find what drives engagement. E-commerce managers who rely on manual creative pipelines hit logistical bottlenecks: testing 50 different combinations of avatar, background environment, and language track with human labor causes long campaign delays. In addition, the natural variability of human performance adds uncontrolled differences between takes, which makes it harder to attribute a result to the variable under test and weakens the statistical significance of the ad set.
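To make the size of such a test matrix concrete, here is a minimal Python sketch that enumerates every combination of a few creative variables. The `AdVariant` class, the `build_variant_matrix` function, and all of the avatar, background, language, and hook labels are hypothetical names chosen for illustration; they are not part of any platform's API.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class AdVariant:
    """One cell of the test matrix; every field value is illustrative."""
    avatar: str
    background: str
    language: str
    hook: str


def build_variant_matrix(avatars, backgrounds, languages, hooks):
    """Return every combination of the supplied creative variables."""
    return [
        AdVariant(a, b, lang, h)
        for a, b, lang, h in product(avatars, backgrounds, languages, hooks)
    ]


# Hypothetical labels; substitute the options your own campaign uses.
variants = build_variant_matrix(
    avatars=["gen_z_creator", "mature_professional"],
    backgrounds=["kitchen", "home_office", "car"],
    languages=["en", "es", "de"],
    hooks=["question", "bold_claim", "demo_first"],
)
print(len(variants))  # 2 * 3 * 3 * 3 = 54 combinations
```

Even a modest set of options multiplies quickly, which is why a matrix of this size is hard to film by hand but straightforward to hand to an automated generator as a batch.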

For that reason, advanced operators turn to a specialized SaaS platform for automated asset synthesis. If a brand needs an aggressive multilingual rollout using a TikTok ad generator, it can lean on the platform's concurrent rendering capacity. The marketing team can also adapt the synthesized assets to different demographic profiles quickly. For instance, they can deploy a Gen-Z avatar for Instagram Reels and then regenerate the exact same script with a mature professional avatar for LinkedIn. This decouples the brand's creative velocity from the production schedules that constrain competitors relying on slower, static media formats, and it keeps the ad account refreshed with new creative, which supports steady, high-margin retail revenue.

With our concurrent rendering infrastructure, marketing departments can also run multivariate A/B tests quickly. Should a market surge call for an immediate pivot to a new product angle, the operator can use the convert text to video feature to generate a new baseline set of avatar videos within minutes. The agency then uses that batch to keep production throughput high, and the brand can keep adapting its visual catalog for niche markets without incurring major capital expenditures for live-action reshoots.
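Concurrent rendering with a plan-dependent limit can be pictured as a bounded pool of jobs. The sketch below uses Python's `asyncio.Semaphore` to cap how many jobs run at once. The `render_variant` function is a hypothetical stand-in that only sleeps; a real integration would call whatever render endpoint the platform exposes, and the limit of 3 is an assumed value.

```python
import asyncio


async def render_variant(name: str) -> str:
    """Hypothetical stand-in for a render request; it only sleeps briefly."""
    await asyncio.sleep(0.01)
    return f"{name}.mp4"


async def render_batch(names, max_concurrent=3):
    """Render every name while running at most max_concurrent jobs at once."""
    semaphore = asyncio.Semaphore(max_concurrent)

    async def limited(name):
        # Each job waits here until one of the max_concurrent slots is free.
        async with semaphore:
            return await render_variant(name)

    # gather returns results in the same order as the input names.
    return await asyncio.gather(*(limited(n) for n in names))


outputs = asyncio.run(render_batch([f"variant_{i}" for i in range(10)]))
print(outputs[:2])
```

Setting `max_concurrent=1` reproduces sequential rendering, so the same batch code works across plans with different limits.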

Comparison Matrix: Creative Manufacturing Modalities

To compare the structural and financial viability of different content production methods, buyers should look at comparative performance. The following matrix contrasts AI synthesis with legacy alternatives across three metrics:

| Manufacturing Modality | Cost Per Unit (CPA Impact) | Render Velocity & Turnaround | A/B Testing Compatibility & Scalability |
|---|---|---|---|
| AI UGC Video Creator | Near-zero. Requires only a minimal monthly SaaS subscription; dramatically lowers CPA. | Minutes. Cloud rendering allows thousands of concurrent units. | Unlimited. Every visual variable (voice, face, background) can be varied programmatically. |
| Hiring Freelance Creators | High. Fees are charged per unique video, which makes profitable scaling difficult. | Slow. Requires weeks for shipping products, filming, and manual editing. | Severe bottlenecks. Each new script variable requires an expensive physical reshoot. |
| Influencer Agencies | High. Management overhead, negotiations, and commission markups add up. | Slow. Multiple communication loops, legal reviews, and strict contract requirements. | Poor. Limited compliance with performance-focused scripts; creators ad-lib. |
| Traditional Studio Shoots | Very high. Demands high-end camera hardware, location rental, and labor OpEx. | Too slow for agile marketing. Logistical turnaround is measured in months. | None. Rapid iteration based on daily ad data is not practical. |

Scripting Best Practices & Integration Specs

Building a strong visual asset portfolio requires attention to script structure inside the AI generation interface. The most important element is the 'hook script'. Unlike standard broadcast copy, performance marketing depends on pattern interrupts: active visual and auditory cues delivered within the first 3 seconds of playback, before the viewer scrolls past. Writing an aggressive hook at the very start of the script that is passed to the TTS engine lets the avatar command attention immediately.
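One rough way to check a hook against the 3-second window is to estimate its spoken duration from its word count. The sketch below assumes a speaking rate of 150 words per minute, which is an illustrative figure rather than a platform setting; actual TTS pacing varies by voice and language. The function names are hypothetical.

```python
ASSUMED_WORDS_PER_MINUTE = 150  # assumption: a conversational speaking pace
HOOK_WINDOW_SECONDS = 3.0       # the 3-second guideline for hooks


def estimated_seconds(text: str, wpm: float = ASSUMED_WORDS_PER_MINUTE) -> float:
    """Estimate spoken duration from the whitespace-separated word count."""
    return len(text.split()) * 60.0 / wpm


def hook_fits(text: str,
              window: float = HOOK_WINDOW_SECONDS,
              wpm: float = ASSUMED_WORDS_PER_MINUTE) -> bool:
    """Return True if the hook's estimated duration fits inside the window."""
    return estimated_seconds(text, wpm) <= window


short_hook = "Stop scrolling if your ads feel stale"
long_hook = ("Have you ever wondered why your ad costs "
             "keep going up every single month")

print(hook_fits(short_hook))  # 7 words, about 2.8 s at 150 wpm -> True
print(hook_fits(long_hook))   # 14 words, about 5.6 s at 150 wpm -> False
```

At the assumed pace, the window holds roughly seven words, so a hook that needs more should be trimmed or moved after the opening line.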

Supplemental visual assets should also follow formatting guidelines. When integrating product shots via the AI product avatar feature, users must supply lossless PNG files with transparent backgrounds so the product composites cleanly into the scene. High-resolution source images give the diffusion model enough pixel data to generate an accurate environment and embed the product convincingly alongside the virtual human.

To optimize throughput and control costs, developers should plan script lengths around the credits charged per second rendered. Processing a 10-minute continuous monologue in a single generation block can destabilize the rendering queue and exhaust credit reserves. Best practice is to break long-form sales letters into modular semantic blocks of 30 to 60 seconds. Finally, to keep visuals coherent across multiple modular scenes, engineers should enable character consistency technology so the avatar and product look the same from scene to scene.
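The chunking advice above can be sketched as a small Python helper that groups whole sentences into blocks of at most 60 seconds and estimates the credits each block would consume. The speaking rate of 150 words per minute and the rate of 2 credits per second are assumptions made for illustration; real pacing and pricing depend on the voice and the plan. The sketch enforces only the upper bound, so a short final block may fall under 30 seconds.

```python
import re

ASSUMED_WORDS_PER_MINUTE = 150    # assumption: conversational speaking rate
ASSUMED_CREDITS_PER_SECOND = 2.0  # hypothetical price; check your own plan


def split_script(script: str, max_seconds: float = 60.0,
                 wpm: float = ASSUMED_WORDS_PER_MINUTE) -> list[str]:
    """Group whole sentences into blocks that stay within max_seconds.

    A single sentence longer than the limit becomes its own oversize block.
    """
    max_words = int(max_seconds * wpm / 60.0)
    sentences = re.split(r"(?<=[.!?])\s+", script.strip())
    blocks, current, count = [], [], 0
    for sentence in sentences:
        if not sentence:
            continue
        n = len(sentence.split())
        # Close the current block before it would exceed the word budget.
        if current and count + n > max_words:
            blocks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        blocks.append(" ".join(current))
    return blocks


def estimate_credits(block: str, wpm: float = ASSUMED_WORDS_PER_MINUTE,
                     rate: float = ASSUMED_CREDITS_PER_SECOND) -> float:
    """Estimate credits as spoken seconds times the per-second rate."""
    seconds = len(block.split()) * 60.0 / wpm
    return seconds * rate


script = "This is sentence number one. " * 40  # 200 words in total
blocks = split_script(script)
print(len(blocks))                            # 150 + 50 words -> 2 blocks
print([estimate_credits(b) for b in blocks])  # [120.0, 40.0]
```

Splitting on sentence boundaries keeps each block semantically complete, so a failed or rejected block can be regenerated without re-rendering the rest of the script.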

Frequently Asked Questions (FAQ)

1. How do I legally secure unconditional commercial usage rights for the AI-generated UGC assets?

According to the platform guidelines, free-tier generations carry cryptographic watermarks, and usage rights are limited to personal, non-commercial drafting. To use your assets for commercial distribution, monetization on TikTok, or paid client delivery, you must upgrade to a Premium or Pro subscription plan, which unlocks watermark-free rendering and grants unconditional commercial usage rights.

2. Is the generated UGC content compatible with strict Meta (Facebook/Instagram) ad platform policies?

Yes. Standard mass-market AI models often produce flickering and shape shifts between frames, tell-tale signs of synthesized media that can trigger Meta's automated ad suppression systems. Our premium generation layers are powered by Wan 2.2, a state-of-the-art diffusion architecture that specializes in high-fidelity temporal consistency. The resulting smoothness reduces the uncanny valley effect, so you keep your speed-to-market without triggering algorithmic shadow-bans.

3. Why does my generated video sequence only show a 480p standard definition resolution?

To keep server costs low while offering unlimited drafts to free users, standard drafting is rendered at a computationally light 480p resolution. To reach the HD resolution that commercial campaigns require, operators must either render on a paid plan or run the integrated AI Video Upscaler to bring the final footage up to 720p or 1080p.

4. What are the strictly enforced concurrency and rendering limits for high-stakes marketing?

Rendering capacity is managed per plan. Free tier users are restricted to sequential rendering to protect server stability. On Pro tiers, concurrency limits vary by plan and typically allow multiple simultaneous generation jobs. This keeps the models stable while letting agencies process large A/B testing batches in parallel.

5. Can I supply my own specialized visual raw materials, such as custom avatars or voices?

Absolutely. The asset pipeline is highly customizable. While standard mass-market generators offer limited stock libraries, our platform lets you supply lossless PNG character anchors and high-fidelity MP4 audio files for voice cloning, so your brand keeps fully unique, proprietary assets.

6. Does the platform run quality control on the final video?

Yes. An internal quality-control stage inspects each generated MP4 for visual artifacts, such as those introduced by lossy compression, and checks that the avatar's lip-sync and micro-expressions remain accurate before export. Start creating today using our online video generator to improve your ad ROAS.

Conclusion

The reality of digital advertising is clear: trying to scale a modern cosmetics or e-commerce brand on a manual, human-dependent creative pipeline leads to operational bottlenecks, ROAS bleed, and eventual brand stagnation. Winning requires high-volume, iterative testing. By moving your brand's creative supply chain to our AI talking photo generator and UGC platform, you keep your products ready for market, counter ad fatigue with a steady stream of fresh creative, and shorten speed-to-market for your entire visual catalog.

Do not hold your brand back with substandard, expensive visual assets. Strengthen your digital video supply chain by upgrading your creative tooling today. Access the advanced AI UGC video creator platform to scale your creative output, lower your Cost Per Acquisition (CPA), and reshape your global performance marketing trajectory.