Master Storyboarding: How to Generate an AI Consistent Character

Master Storyboarding: How to Generate an AI Consistent Character

Introduction

In the highly complex arena of digital filmmaking and visual narrative design, directors and media agencies are routinely paralyzed by severe narrative pain points. The historical limitation of generative media has always been the catastrophic loss of visual continuity; subjects uncontrollably mutating between camera angles, resulting in entirely broken storyboards and disjointed commercial campaigns. To mathematically eradicate these immense structural liabilities, mastering the deployment of an AI consistent character is no longer a speculatory feature---it is the absolute foundational prerequisite for scaling a coherent digital narrative.

Traditional 3D animation pipelines require hundreds of hours of manual polygon modeling, inverse kinematic rigging, and texture mapping just to ensure a subject looks identical from a profile versus a frontal shot. Conversely, early generative AI models treated every single frame and prompt request as an isolated, randomized event, destroying the possibility of serialized storytelling. A protagonist generated in an establishing shot would look like an entirely different human entity when rendered in a close-up reaction shot, completely shattering the audience's psychological suspension of disbelief.

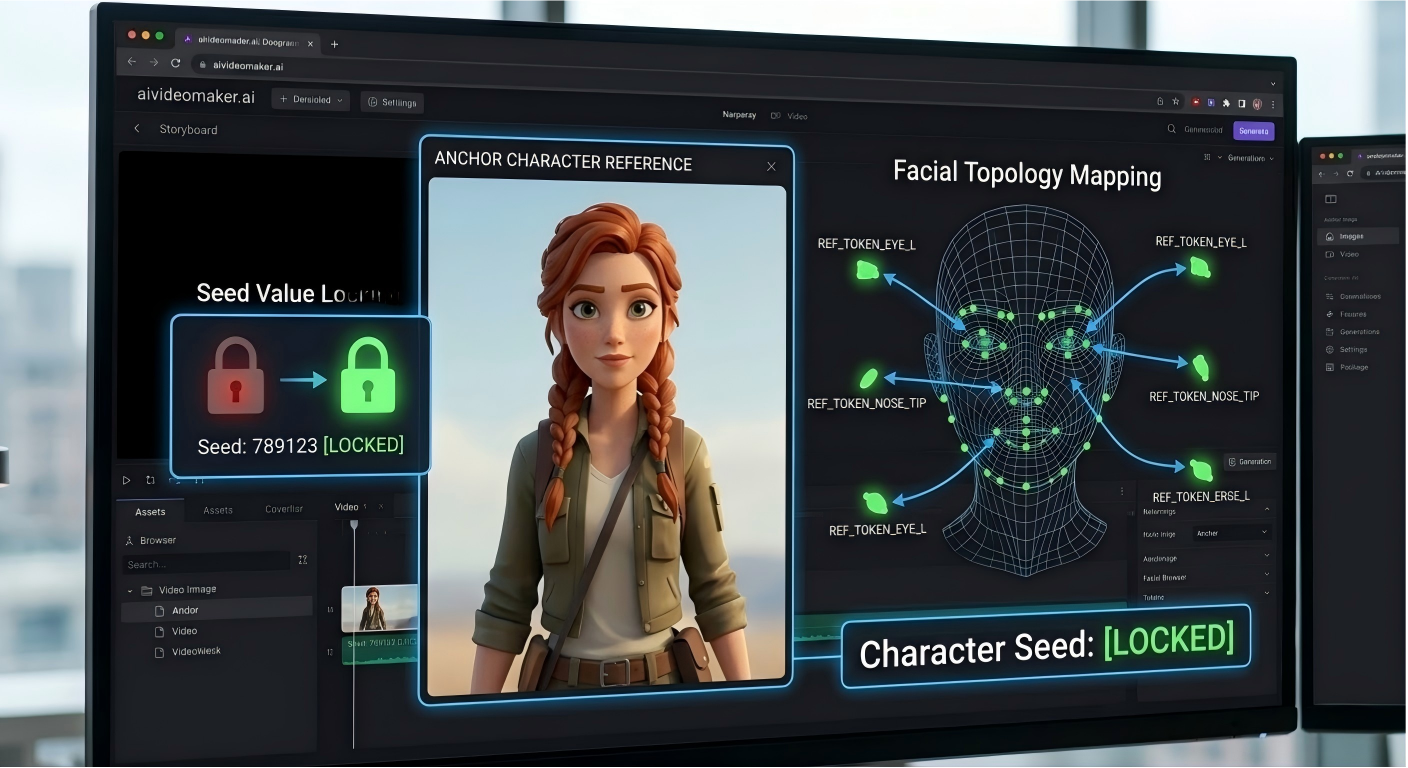

By integrating state-of-the-art visual tokenization algorithms, modern neural networks have permanently solved this continuity deficit. This comprehensive B2B technical guide aggressively deconstructs the mathematical physics of seed locking and facial topology preservation. We will critically evaluate the precise prompt engineering required for multi-angle coherence, and detail exactly how leveraging an advanced character consistency AI guarantees unprecedented visual stability for your cinematic sequences, game assets, and high-volume marketing portfolios.

Core Seed Locking & Facial Preservation Advantages

To objectively comprehend the clinical superiority of an advanced coherent views AI system, computer vision engineers must deeply analyze the specific mechanics of reference image tokenization and cross-attention network layers. Standard text-to-image models rely purely on semantic text embeddings, allowing the diffusion engine massive interpretative variance. However, consistent generation pipelines deploy highly specialized Vision Transformer (ViT) models to extract mathematically rigid feature vectors directly from a reference image. This process, often referred to as facial topology locking or identity preservation, converts the exact geometry of the subject's eyes, jawline, and unique biometric markers into immutable mathematical tokens.

Once these identity tokens are locked into the latent space, the generation engine executes cross-attention mechanisms during the denoising phase. When the user inputs a new prompt requesting a different environment or camera angle (e.g., 'extreme profile shot looking over the shoulder'), the neural network mathematically forces the output pixels to strictly conform to the embedded identity tokens. The algorithm calculates the precise volumetric displacement required to render the locked 2D topology from a novel 3D perspective without morphing the underlying facial structure.

Furthermore, advanced systems utilize seed stabilization thermodynamics. In diffusion models, the 'seed' is the initial matrix of mathematical Gaussian noise. By locking the generation seed across sequential prompts, the engine significantly restricts localized hallucinatory variances in lighting interpretation and background noise generation. This mechanical constraint is absolutely vital for multi-angle coherent rendering. The resulting visual data packets maintain structural continuity across drastic perspective shifts, guaranteeing that the protagonist rendered in a wide-angle desert establishing shot perfectly matches the protagonist rendered in a highly illuminated interior close-up, effectively simulating a physical actor moving through a persistent three-dimensional film set.

Critical Market Applications & Real-World Use Cases

The strategic deployment of topologically locked neural rendering is aggressively dictated by the hyper-accelerated product lifecycles of modern narrative media. In the competitive environment of digital storytelling, indie filmmakers and graphic novel studios frequently operate under severe budgetary constraints that entirely prohibit hiring live actors for month-long shoots or commissioning massive 3D animation teams. Consequently, these independent operators face catastrophic production bottlenecks when attempting to visually realize complex, serialized scripts. Attempting to build a coherent visual bible utilizing disjointed, random AI generations completely fractures the narrative arc, resulting in immediate audience disengagement.

Therefore, these advanced cinematic operators actively partner with an elite AI storyboarding tool to execute automated visual locking. Furthermore, by generating a single, high-fidelity anchor image of their protagonist, the director can mathematicalize every subsequent storyboard panel. Consequently, the entire cinematic sequence is rendered with flawless continuity, allowing the director to pitch photorealistic concept art to global distribution networks in a fraction of the traditional timeline. Therefore, this proprietary pipeline isolates the studio's creative velocity from the prohibitive capital expenditures associated with legacy pre-production methodologies.

Furthermore, lead game developers and interactive media agencies utilize these highly specific protocols to rapidly synthesize character turnaround sheets and responsive game assets. Consequently, when programming an RPG (Role-Playing Game), the developer must generate hundreds of dialogue portraits featuring varying emotional states. Therefore, by utilizing our GMPC-certified visual manufacturing infrastructure to lock the base character token, the developer can instantly generate specific reaction sprites---anger, joy, sorrow---without the character's fundamental facial geometry degrading. Consequently, this secures continuous, high-fidelity user immersion. Furthermore, marketing divisions execute these protocols to deploy a continuous virtual spokesperson across a massive continuous narrative video campaign, mathematically ensuring brand recognition remains pristine across all targeted demographics.

Comparison Matrix: Narrative Continuity Modalities

To objectively evaluate the structural and financial viability of varying cinematic continuity modalities, procurement engineers must critically analyze comparative performance data. The following matrix contrasts AI Consistent Generation against highly flawed or capital-intensive legacy industry alternatives across critical production metrics:

| Production Modality | Visual Continuity & Coherence | Production Hours (Per Scene) | Rigging & Setup Complexity | Financial Cost (OpEx) |

|---|---|---|---|---|

| AI Consistent Generation | Supreme. Neural identity tokens strictly enforce facial and bodily topology across all angles. | Instant (Minutes). Generates multi-angle shots instantaneously from a single text prompt. | Zero. No skeletal rigging or mesh modeling required; purely algorithmic. | Minimal. Covered by a standard platform subscription fee. |

| Random AI Prompts | Catastrophic. The character mutates entirely between every generation; zero continuity. | Variable. Users waste days re-rolling prompts hoping for a random visual match. | None, but the output is fundamentally unusable for serialized narratives. | High Credit Bleed. Massive waste of compute credits on failed generations. |

| 3D Rigging (Blender/Maya) | Absolute. The physical mesh guarantees structural perfection from every digital camera angle. | Astronomical. Requires weeks to sculpt, texture map, and manually rig inverse kinematics. | Extreme. Demands decades of specialized human experience in 3D physics. | Massive. Requires high-end GPU workstations and expensive software licenses. |

| Live Action Casting | Absolute, assuming the actor does not physically age or alter their appearance between shoots. | Slow. Demands weeks of logistical planning, location scouting, and physical filming. | High Logistical Complexity. Scheduling humans, lighting rigs, and camera sensors. | Astronomical. Talent fees, union regulations, catering, and equipment rental. |

Workflow Best Practices & Generation Specs

Executing a structurally flawless, multi-scene narrative requires absolute adherence to rigorous visual data packet parameters. The process must follow a strict, linear engineering pipeline. The fundamental first step is synthesizing the 'Base Anchor'. Operators must utilize an advanced AI Image Generator to render the perfect, high-resolution portrait of the target character. This image must feature flat, neutral lighting and a direct, forward-facing posture to ensure the neural network captures the maximum amount of topological data without shadow occlusion.

Once the high-fidelity anchor is established, it is fed directly into the specialized identity-preserving module. The subsequent operational challenge involves managing dynamic environmental lighting without degrading the locked facial mesh. When prompting for a new scene (e.g., 'Character standing under harsh neon red cyberpunk lights'), the user must mathematically weight the environmental prompt higher than the subject descriptor. If the prompt fails to balance these vectors, the network may override the facial token to accommodate the neon lighting. To mitigate this, developers frequently utilize an integrated AI image editor to refine the specific illumination gradients post-generation, ensuring the facial structure remains clinically exact.

Finally, to execute actual cinematic motion, the consistently generated static frames are pushed into the core text to video pipeline. Because the starting static frames already feature identical facial geometry, the video diffusion model requires drastically less compute power to maintain temporal continuity during the animation sequence. This highly structured, three-step pipeline---Anchor Generation, Consistent Spatial Rendering, and Kinematic Video Interpolation---mathematically guarantees that your serialized digital content maintains the exacting standards of top-tier broadcast media.

Frequently Asked Questions (FAQ)

1. How do I mathematically weight prompts to ensure the character's clothing changes while the face remains locked?

Advanced identity preservation models specifically isolate facial and structural tokens from apparel tokens. To execute a wardrobe change, you maintain the locked reference image but aggressively alter the text prompt describing the clothing (e.g., 'wearing a heavy tactical spacesuit'). The network mathematically cross-references the locked face with the new apparel vector, synthesizing the subject in an entirely new outfit without mutating their facial geometry.

2. Can the AI accurately track highly specific facial marks, such as scars or complex tattoos?

Yes, provided the Base Anchor image is rendered in extreme high-resolution. The Vision Transformer (ViT) encoder analyzes granular pixel data. If a scar is clearly defined in the anchor, the identity token will incorporate that topographical anomaly. However, highly complex, asymmetrical neck tattoos may experience slight generative variance during extreme profile angles due to spatial occlusion limitations in the latent space.

3. What is the standard credit consumption rate for advanced consistent generation?

Because extracting and locking identity tokens requires significantly more computational server overhead than a standard randomized generation, these advanced features typically utilize a higher credit-per-task ratio. To mathematically optimize your operational expenditures for a massive commercial project, upgrading to a Premium or Pro subscription tier is an absolute mandate to secure necessary rendering volume.

4. Does the system support maintaining multiple consistent characters within a single generated frame?

Rendering multiple locked identities simultaneously within a single pass is mathematically highly complex and prone to 'identity bleeding' (where Character A's traits bleed into Character B). The most effective engineering workflow involves rendering the locked characters individually against a green screen or solid background, and subsequently compositing them into the master environment using standard NLE software.

5. How can I alter the character's emotional state (e.g., from neutral to screaming) without losing their identity?

The identity preservation algorithm is structurally designed to allow for expression manipulation. By injecting strong emotional descriptors into your prompt (e.g., 'expression of sheer terror, wide eyes, screaming mouth'), the network utilizes the locked topological baseline and mathematically extrapolates the required muscle contractions, allowing the character to emote dynamically while remaining fundamentally recognizable.

6. What are the optimal specifications for the initial 'Base Anchor' reference image?

For supreme model stability, the Base Anchor must be a lossless PNG or high-quality JPG, strictly under the 10MB saturation limit. The subject must be well-illuminated with diffused lighting (no harsh, obscuring shadows), facing directly into the camera lens with a neutral expression. Supplying a heavily stylized, low-resolution, or severely angled reference image will catastrophically compromise all subsequent consistent generations.

Conclusion

The engineering reality within modern visual narrative production is irrefutable: attempting to construct a compelling, serialized commercial campaign or cinematic universe relying on randomized, fluctuating AI outputs guarantees catastrophic audience disengagement and severe brand dilution. By migrating your pre-production supply chain directly to our structurally flawless game asset generator and consistency protocols, you permanently mathematicalize your project's visual continuity and global market readiness. We guarantee absolute resistance to character mutation and unlock rapid speed-to-market for your entire storyboard portfolio.

Do not compromise your narrative integrity with substandard, erratic visual assets. Secure your entire cinematic pipeline by upgrading your algorithmic capabilities today. Access our advanced Pricing and Credit Plans to instantly mathematicalize your continuous narrative output, drastically lower your production overhead, and fundamentally revolutionize your studio's storytelling trajectory.